Teachers Deserve Better Access to Educational Evidence

How a new tool and generative AI can change the relationship between teachers and educational evidence

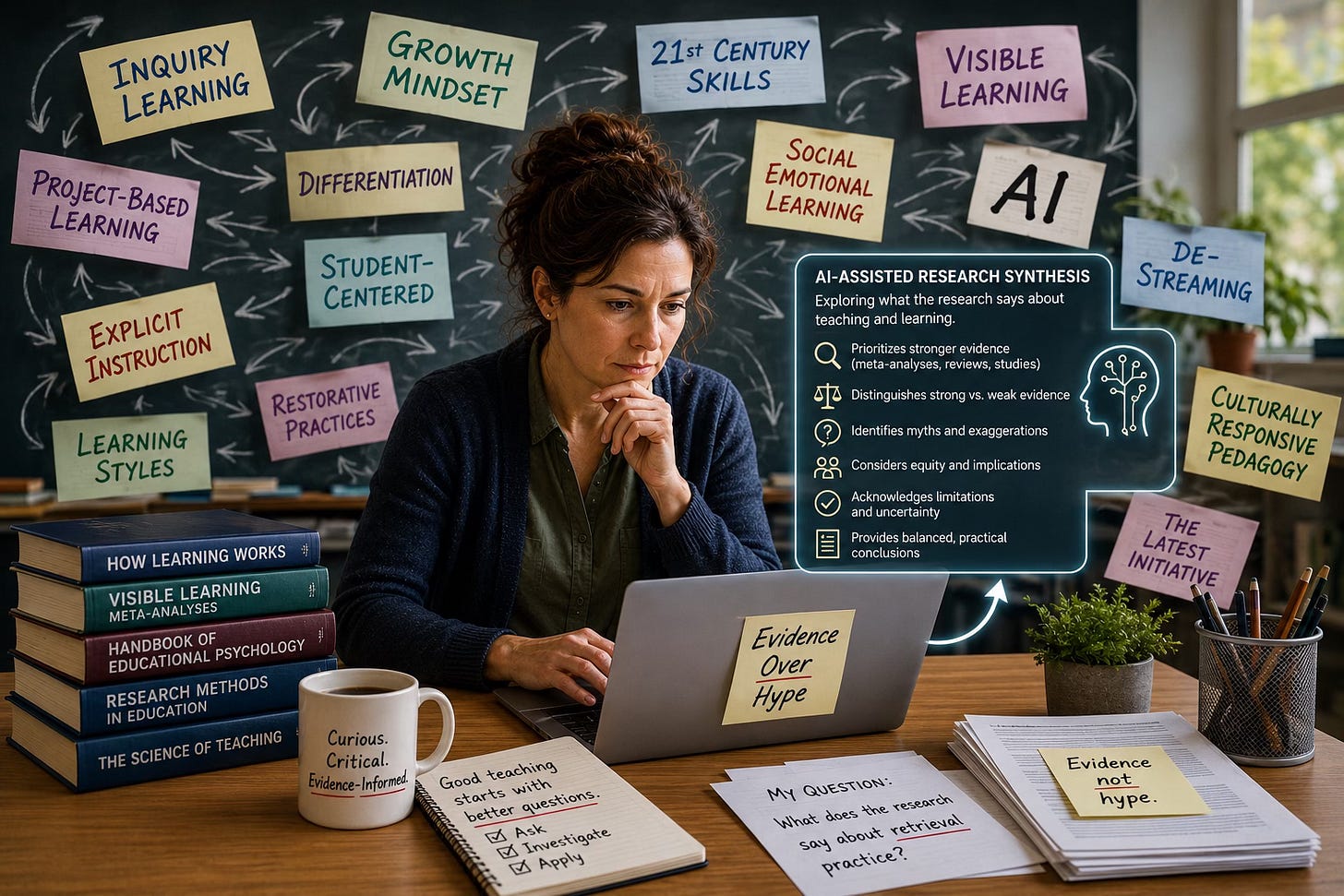

Teachers are surrounded by educational claims.

One workshop tells them that inquiry learning is the key to deeper understanding. Another insists explicit instruction is essential. One expert warns against quizzes because they may increase anxiety. Another says retrieval practice is one of the most effective learning strategies ever studied. Social media is full of confident advice, bold slogans, viral classroom strategies, and sweeping claims about what “good teaching” should look like.

Teachers can end up not knowing who or what to trust. How do you figure out which ideas are actually supported by strong evidence?

One option is to consult the educational research literature itself. But that comes with its own problems. The literature is enormous, fragmented, and often difficult to interpret. Tens of thousands of studies are published every year. Findings are scattered across journals, books, reports, podcasts, professional development sessions, conference talks, and websites. On almost any topic, there are conflicting claims, varying study quality, unresolved debates, and endless oversimplifications.

For many classroom teachers, systematically sorting through all of this isn’t realistic.

Until recently.

We’ve been experimenting with a new tool designed to help teachers quickly and easily explore what educational research says about teaching and learning.

The Evidence Checker

We created The Evidence Checker for teachers who want clearer, more evidence-informed answers to practical classroom questions, such as:

“What does the evidence say about inquiry-based learning?”

“Will regular math quizzes in my grade 5 class increase student anxiety?”

“I teach grade 8. What kinds of classroom management approaches have the strongest evidence behind them?”

“What does the research say about de-streaming?”

The tool inserts the question into a carefully structured prompt, which the teacher then pastes into ChatGPT or another generative-AI system. The prompt is specifically designed to push the AI toward evidence-informed, nuanced, and cautious responses.

Instead of generating vague inspirational language or simplistic lists of “best practices,” the AI is instructed to:

critically evaluate the existing research literature,

distinguish between strong and weak evidence,

explain important caveats and limitations,

identify common myths or exaggerations,

and summarize what a reasonable evidence-informed teacher might conclude.
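
For readers curious about the mechanics, here is a minimal sketch of how a question could be wrapped in a structured prompt. It is an illustration only: the wording of `PROMPT_TEMPLATE` and the function name `build_prompt` are our own assumptions for this example, not the actual prompt used by The Evidence Checker. The five numbered instructions simply mirror the list above.

```python
from string import Template

# Hypothetical template for illustration. The real Evidence Checker prompt
# is not reproduced here; these instructions mirror the list in the article.
PROMPT_TEMPLATE = Template(
    "You are helping a classroom teacher understand educational research.\n"
    "Teacher's question: $question\n"
    "\n"
    "In your answer:\n"
    "1. Critically evaluate the existing research literature.\n"
    "2. Distinguish between strong and weak evidence.\n"
    "3. Explain important caveats and limitations.\n"
    "4. Identify common myths or exaggerations.\n"
    "5. Summarize what a reasonable evidence-informed teacher might conclude.\n"
)


def build_prompt(question: str) -> str:
    """Insert a teacher's question into the structured prompt."""
    # Collapse stray whitespace so the pasted prompt stays tidy.
    cleaned = " ".join(question.split())
    if not cleaned:
        raise ValueError("Please enter a teaching or learning question.")
    return PROMPT_TEMPLATE.substitute(question=cleaned)


if __name__ == "__main__":
    # The resulting text is what a teacher would copy and paste into an AI system.
    print(build_prompt("What does the research say about de-streaming?"))
```

The point of the design is that the question changes while the instructions stay fixed, so every question is framed by the same request for evidence quality, caveats, and myths.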

Using the tool is simple:

Go to The Evidence Checker webpage.

Type in a teaching or learning question (we show you some examples).

Press “Copy Prompt.”

Paste the prompt into ChatGPT or another AI system.

That’s it.

For example, a teacher might ask whether learning styles improve academic achievement. Instead of receiving a slogan or simplistic answer, the AI might summarize the weak evidence base for learning styles, distinguish between preferences and learning outcomes, and point the teacher toward approaches with stronger empirical support.

The tool is not perfect. Educational research is often messy and context-dependent. Some educational questions genuinely remain unresolved. And AI systems can still make mistakes. But in our experience, the responses are often far more thoughtful, balanced, and evidence-oriented than the kinds of discussions teachers typically encounter in social media debates, educational marketing, or everyday professional development.

Why this matters

For a long time, teachers have largely had to rely on intermediaries to interpret educational research for them. These intermediaries include consultants, publishers, school leaders, professional development providers, advocacy groups, influencers, curriculum writers, and edu-celebrities. One challenge is that teachers are often asked to adopt educational ideas without clear visibility into the strength of the underlying evidence. The ideas teachers encounter are shaped not only by research, but also by institutional priorities, professional trends, commercial incentives, and ideological commitments. By the time ideas reach teachers, they are often simplified, reframed, selectively presented, or promoted with far more certainty than the underlying evidence can support.

For example, sometimes professional development becomes a vehicle for advancing particular ideological or pedagogical commitments rather than carefully examining competing evidence. In other cases, new initiatives are driven by institutional pressures to demonstrate innovation and change. School systems are often in a near-constant cycle of reform, and leaders understandably feel pressure to introduce new frameworks, programs, and visions for improvement, sometimes before strong supporting evidence is available.

This is one of the reasons why education has historically been so susceptible to instructional churn: fads, oversimplified solutions, and highly confident ideas that spread rapidly despite limited or inconsistent supporting evidence.

Importantly, this is not simply a problem of individual bad actors. Educational research itself is often complex, fragmented, and difficult to interpret. Even well-intentioned people can misread, oversimplify, or overstate findings. But the structure of the system has often left teachers in a difficult position: they are expected to implement new ideas without meaningful access to the underlying evidence, and without clear ways to evaluate the strength of the claims they encounter.

In part, this has been an issue of power and accountability. Those who lead professional development have often occupied the privileged role of interpreting research, while classroom teachers were expected to trust the summaries and presentations given to them. As a result, educational initiatives have often circulated through schools with far less transparency and accountability than would be expected in other evidence-informed professions.

Generative AI may change how teachers interact with educational research.

For the first time, many teachers may now have a practical way to directly interrogate educational claims, compare competing perspectives, ask follow-up questions, and explore what stronger forms of evidence actually suggest. The goal is not to replace professional judgment or outsource thinking to AI. Rather, the goal is to give teachers better access to research conversations that were previously difficult to navigate without specialized expertise or enormous amounts of time.

When access to evidence becomes more widely distributed, the professional conversation may begin to shift as well.

Using the Tool

When you use The Evidence Checker, the AI's initial response will provide you with a synthesis of the research. But the real power comes from asking follow-up questions. You can dive deeper into the evidence, explore nuances, and connect research to your own classroom.

For example, you might ask:

“What would this look like in my Grade 4 classroom?”

“Can you give me a sample lesson?”

“How strong is the evidence for this claim?”

“Are there meta-analyses on this topic?”

“Which students might benefit the most?”

“How does this compare to explicit instruction?”

That kind of conversation has the potential to shift the profession in important ways. Instead of simply receiving educational ideas from above, teachers may increasingly be able to question claims, compare competing approaches, and make more evidence-informed professional judgments. Research rarely provides simple universal answers, but it can help teachers more carefully evaluate claims, tradeoffs, and likely outcomes.

Teaching is too important to be driven by slogans, trends, and competing ideologies. Teachers deserve better access to evidence, better tools for evaluating claims, and more opportunities to think critically about what actually helps students learn.

We hope this tool is one small step in that direction.

Note: This article was inspired, in part, by Anna Stokke’s article, What to do when “Research Shows” shuts you down (podcast) and Robert Pondiscio’s article, Why is Education So Damn Fad-Prone?

How about the licensing body gives teachers access to research databases and journals so they can look for themselves? BCBAs and other professionals get such access as part of their credentialing. How about we stop pushing fads and gimmicks at teachers and give them the actual research so they can use it to teach in a research-based way?

The research-to-practice pipeline breakdown is so real! Teachers don't have time, academics live in the ivory tower, and to your point, there just isn't a reliable translator to provide a trustworthy and detailed enough summary that is actually relevant. Looking forward to using your tool! This is the stuff we should be using AI for.